AMD Announces Ryzen 7000 Reveal Livestream for August 29th

In a brief press release sent out this morning, AMD has announced that they will be delivering their eagerly anticipated Ryzen 7000 unveiling later this month as a live stream. In an event dubbed “together we advance_PCs”, AMD will be discussing the forthcoming Ryzen 7000 series processors as well as the underlying Zen 4 architecture and associated AM5 platform – laying the groundwork ahead of AMD’s planned fall launch for the Ryzen 7000 platform. The event is set to kick off on August 29th at 7pm ET (23:00 UTC), with CEO Dr. Lisa Su and CTO Mark Papermaster slated to present.

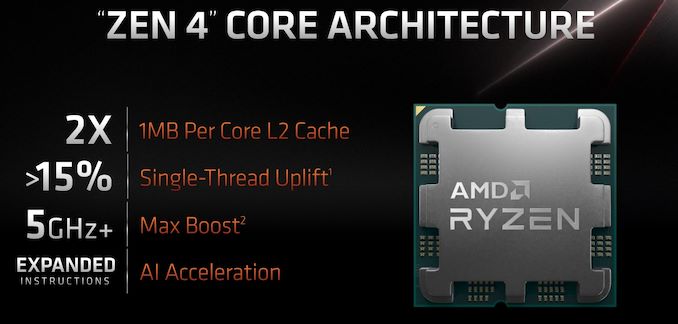

AMD first unveiled their Ryzen 7000 platform and branding back at Computex 2022, offering quite a few high-level details on the forthcoming consumer processor platform while stating it would be launching in the fall. The new CPU family will feature up to 16 Zen 4 cores using TSMC’s optimized 5 nm manufacturing process for the Core Complex Die (CCD), and TSMC’s 6nm process for the I/O Die (IOD). AMD has not disclosed a great deal about the Zen 4 architecture itself, though their Computex presentation has indicated we should expect a several percent increase in IPC, along with a further several percent increase in peak clockspeeds, allowing for a 15%+ increase in single-threaded performance.

The Ryzen 7000 series is also notable for being the first of AMD’s chiplet-based CPUs to integrate a GPU – in this case embedding it in the IOD. The modest GPU allows for AMD’s CPUs to supply their own graphics, eliminating the need for a discrete GPU just to boot a system while, we expect, providing enough performance for basic desktop work.

| AMD Desktop CPU Generations | |||

| AnandTech | Ryzen 7000 (Raphael) |

Ryzen 5000 (Vermeer) |

Ryzen 3000 (Matisse) |

| CPU Architecture | Zen 4 | Zen 3 | Zen 2 |

| CPU Cores | Up To 16C / 32T | Up To 16C / 32T | Up To 16C / 32T |

| GPU Architecture | RDNA2 | N/A | N/A |

| GPU Cores | TBD | N/A | N/A |

| Memory | DDR5 | DDR4 | DDR4 |

| Platform | AM5 | AM4 | AM4 |

| CPU PCIe Lanes | 24x PCIe 5.0 | 24x PCIe 4.0 | 24x PCIe 4.0 |

| Manufacturing Process | CCD: TSMC N5 IOD: TSMC N6 |

CCD: TSMC N7 IOD: GloFo 12nm |

CCD: TSMC N7 IOD: GloFo 12nm |

The new CPU family will also come with a new socket and motherboard platform, which AMD is dubbing AM5. The first significant socket update for AMD in six years will bring with it a slew of changes and new features, including a switch to an LGA-style socket (LGA1718) and support for DDR5 memory. Providing the back-end for AM5 will be AMD’s 600 series chipsets, with AMD set to release both enthusiast and mainstream chipsets. PCIe 5.0 will also be supported by the platform, but in the interest of keeping motherboard prices in check, it is not a mandatory motherboard feature.

The remaining major disclosures that AMD hasn’t made – and which we’re expecting to see at their next event – will be around the Zen 4 architecture itself, as well as information on specific Ryzen 7000 SKUs. Pricing information is likely not in the cards (the industry has developed a strong tendency to announce prices at the last minute), but at the very least we should have an idea of how many cores to expect on the various SKUs, as well as where the official TDPs will land in this generation given AM5’s greater power limits.

Meanwhile, AMD’s press release does not mention whether the presentation will be recorded or live. Like most tech companies, AMD switched to pre-recorded presentations due to the outbreak of COVID-19, which in turn has been paying dividends in the form of breezier and more focused presentations with higher production values. While relatively insignificant in the big picture of things, it will be interesting to see whether AMD is going back to live presentations for consumer product unveils such as this.

In any case, we’ll find out more during AMD’s broadcast. The presentation is slated to air on August 29th at 7pm Eastern, on AMD’s YouTube channel. And of course, be sure to check out AnandTech for a full rundown and analysis of AMD’s announcements.